Work

Most AI consultants show you slide decks of frameworks they've never deployed. The work below is real — live systems handling live data, live users, and the kind of integration complexity that doesn't survive contact with a slide deck.

Three current case studies plus a portfolio piece. Each illustrates a different facet of the five-pillar method.

Two integrated production applications — an agent-facing helpdesk and a customer-facing portal — sharing one database, one design system, and a real Claude-powered AI layer that surfaces relevant past resolutions and converses with customers before handing off to a human.

Customer support tickets arriving through HubSpot were piling up. Agents were re-solving problems other agents had already resolved. Customers had no self-service portal to track their own tickets or submit new ones, and email threads were the only audit trail.

The team didn't need another monolithic SaaS helpdesk. They needed something integrated with their existing HubSpot data, branded to their identity, and architected to use AI where AI actually adds value — not as a chatbot gimmick.

Two applications sharing a single MySQL database. The agent helpdesk handles ticket triage, replies, internal notes, and reporting. The customer portal lets end users submit and track tickets, with strict per-action visibility controls so internal notes never leak.

Microsoft 365 SSO for both staff and customers. Email-domain auto-provisioning — if a domain is whitelisted, a customer's first sign-in auto-creates their account against the right company.
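The auto-provisioning rule can be sketched in a few lines. This is an illustration in Python, not the production code: the `Directory` class, its in-memory dictionaries, and the example domain are all hypothetical stand-ins for the real whitelist and account tables.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Directory:
    # Hypothetical whitelist: email domain -> company name.
    domain_to_company: Dict[str, str]
    # Hypothetical account store: email -> company name.
    accounts: Dict[str, str] = field(default_factory=dict)

    def sign_in(self, email: str) -> Optional[str]:
        """Return the user's company, creating the account on first sign-in."""
        email = email.strip().lower()
        if email in self.accounts:
            return self.accounts[email]
        # Everything after the last "@" is treated as the domain.
        _, _, domain = email.rpartition("@")
        company = self.domain_to_company.get(domain)
        if company is None:
            return None  # Domain is not whitelisted: no account is created.
        self.accounts[email] = company
        return company


directory = Directory({"acme.example": "Acme"})
first_login = directory.sign_in("Pat@Acme.example")
```

Normalizing the address before the lookup keeps a mixed-case sign-in from creating a second account for the same person.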

As a customer types a new ticket, Claude Haiku extracts distinctive technical keywords. A debounced FULLTEXT search runs against resolved tickets. Snippets are auto-anonymized — emails, phone numbers, and greetings stripped — before they're shown.
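A rough sketch of the anonymization step, in Python for illustration. The regular expressions are simplified assumptions rather than the production patterns, and replacing matches with `[email]` and `[phone]` placeholders (instead of deleting them outright) is a choice made here for readability.

```python
import re

# Simplified, hypothetical patterns; real-world data needs broader coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")
GREETING_RE = re.compile(
    r"^\s*(?:hi|hello|hey|dear)\b[^\n,!.]*[,!.]?\s*", re.IGNORECASE
)


def anonymize(snippet: str) -> str:
    """Drop a leading greeting and mask emails and phone numbers."""
    text = GREETING_RE.sub("", snippet)
    text = EMAIL_RE.sub("[email]", text)
    text = PHONE_RE.sub("[phone]", text)
    return text.strip()


cleaned = anonymize(
    "Hi Dana, please call 555-123-4567 or mail dana@example.com about the VPN error."
)
```

Masking emails before phone numbers avoids the phone pattern matching digits inside an address.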

A floating chat agent on every portal page runs a tool-use loop. The model calls search_past_resolutions to find known fixes and list_my_open_tickets to detect related issues, then asks targeted clarifying questions and hands off to a real ticket with full conversation history when human help is needed.
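The shape of that loop can be sketched without any network calls. In this Python illustration the two tool names come from the description above, but everything else is an assumption: the tool bodies are stubs, and the `{"tool": ...}` / `{"text": ...}` reply format is a simplified stand-in, not the Anthropic API's actual response structure.

```python
from typing import Callable, Dict, List


def search_past_resolutions(query: str) -> List[str]:
    # Stub standing in for the search over resolved tickets.
    if "vpn" in query.lower():
        return ["Reset the VPN profile and re-enroll the device."]
    return []


def list_my_open_tickets(customer_id: str) -> List[str]:
    # Stub standing in for a per-customer ticket lookup.
    return []


TOOLS: Dict[str, Callable] = {
    "search_past_resolutions": search_past_resolutions,
    "list_my_open_tickets": list_my_open_tickets,
}


def run_chat_turn(model: Callable, messages: List[dict], max_steps: int = 5) -> str:
    """Call the model until it answers in text, running any tool it requests."""
    for _ in range(max_steps):
        reply = model(messages)
        if "tool" not in reply:
            return reply["text"]
        result = TOOLS[reply["tool"]](**reply["args"])
        messages.append({"role": "tool", "name": reply["tool"], "content": result})
    # Step budget exhausted: fall back to a human.
    return "Let me hand this off to a human agent."


def fake_model(messages: List[dict]) -> dict:
    # Scripted stand-in for the model: search once, then answer from the result.
    if not any(m["role"] == "tool" for m in messages):
        return {"tool": "search_past_resolutions", "args": {"query": "VPN fails"}}
    return {"text": "Try: " + messages[-1]["content"][0]}


answer = run_chat_turn(fake_model, [{"role": "user", "content": "VPN fails"}])
```

Capping the number of steps gives the loop a guaranteed exit, which is where a handoff to a real ticket would naturally sit.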

On the staff side, an AI panel summarizes ticket threads, drafts replies in the team's voice, and supports free-form Q&A about the ticket. Configurable model routing — Haiku for routine work, Sonnet for nuanced cases.

Direct Anthropic API integration rather than a framework wrapper. Reason: every framework we evaluated added a hard timeout we couldn't override, and requests from the client's network occasionally took 60+ seconds to reach the API. Calling the API directly with our own timeout headroom is more reliable than depending on someone else's HTTP abstraction layer. This is the kind of decision that shows up only after the system is in production, not during architecture review.
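As an illustration of what owning the timeout looks like, here is a minimal Python sketch that posts to the Anthropic Messages endpoint with the standard library only. The 180-second default, the function names, and the choice of `urllib` are assumptions made for this example; the model ID is left as a parameter rather than guessed.

```python
import json
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"


def build_request(api_key: str, model: str, prompt: str, max_tokens: int = 512):
    """Build a Messages API request without sending it."""
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout_seconds: float = 180.0) -> dict:
    # The timeout is ours to choose; 180 s is an assumed value that leaves
    # headroom over a slow 60+ second path to the API.
    with urllib.request.urlopen(request, timeout=timeout_seconds) as response:
        return json.load(response)


request = build_request("test-key", "model-id-goes-here", "Summarize this ticket.")
```

Note that `urlopen`'s timeout applies to individual blocking socket operations rather than the whole exchange, so a strict end-to-end deadline would need an extra guard around `send`.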

Visibility was treated as a first-class problem from day one. Every activity row carries a visible_to_customer flag. The agent UI surfaces this as a checkbox on every reply and note — checked by default for outbound replies, unchecked by default for internal notes. No accidents, no leaked internal commentary.
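The defaulting rule is small enough to sketch directly. In this Python illustration only the `visible_to_customer` flag comes from the system described above; the `Activity` class, the `kind` values, and the helper names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional

# Defaults mirror the checkbox behavior: replies visible, internal notes hidden.
DEFAULT_VISIBLE = {"reply": True, "internal_note": False}


@dataclass
class Activity:
    kind: str
    body: str
    visible_to_customer: bool


def new_activity(kind: str, body: str, visible_to_customer: Optional[bool] = None) -> Activity:
    """Create an activity, applying the per-kind default unless the agent overrides it."""
    if visible_to_customer is None:
        visible_to_customer = DEFAULT_VISIBLE[kind]
    return Activity(kind, body, visible_to_customer)


def customer_view(activities: List[Activity]) -> List[Activity]:
    """Return only the rows the portal is allowed to show."""
    return [a for a in activities if a.visible_to_customer]


thread = [
    new_activity("reply", "We've reset your VPN profile."),
    new_activity("internal_note", "Customer is on the legacy plan."),
    new_activity("reply", "Draft, not ready to send.", visible_to_customer=False),
]
portal_rows = customer_view(thread)
```

Because an unknown `kind` raises a `KeyError` instead of silently defaulting to visible, a new activity type cannot leak by omission.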

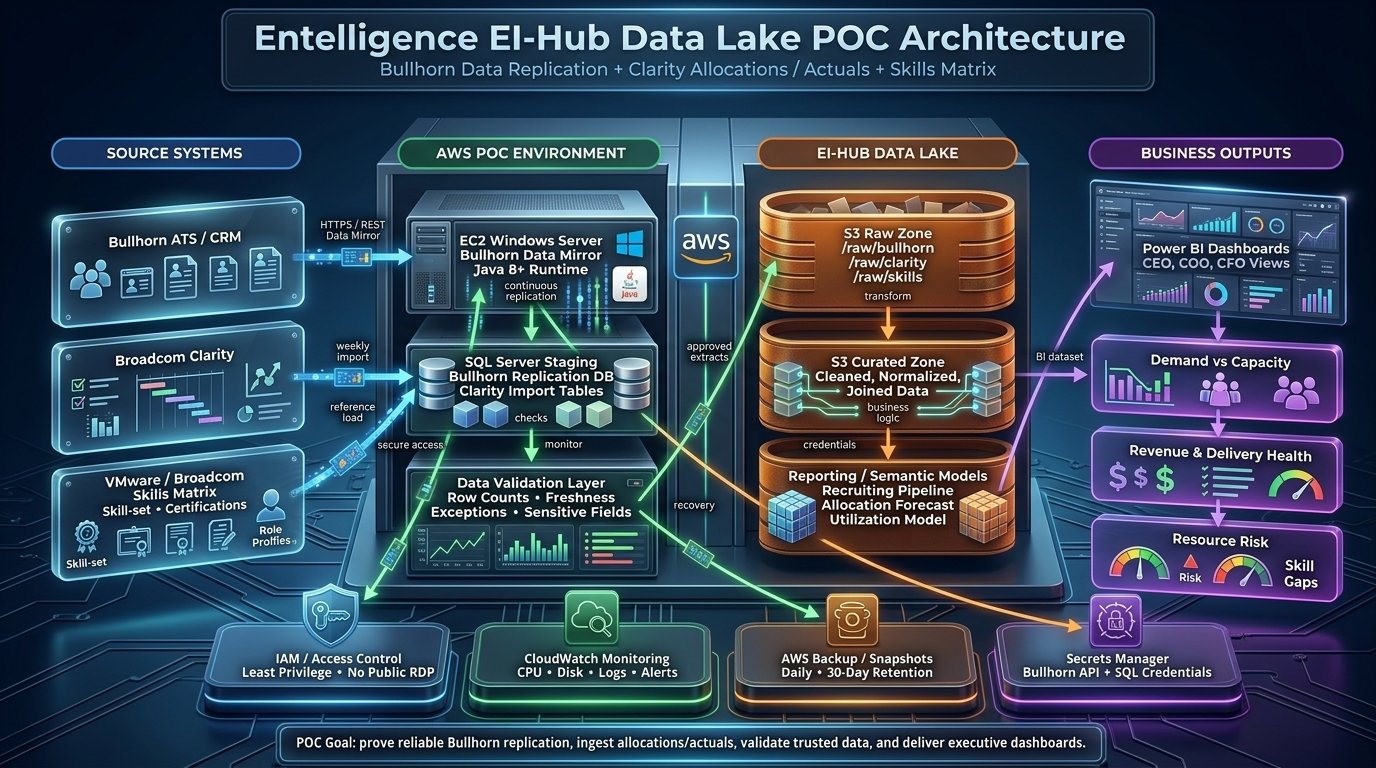

An AWS data lake unifying recruiting, resource allocation, and skills data into trusted executive dashboards — the kind of foundational data architecture that determines whether downstream AI initiatives are even possible.

Source systems on the left, AWS POC environment in the middle, curated data lake and business outputs on the right.

Three critical source systems — Bullhorn ATS for recruiting, Broadcom Clarity for resource allocations and actuals, and a VMware/Broadcom skills matrix — lived in separate silos. Executives needed unified views (demand vs capacity, revenue and delivery health, resource risk and skill gaps) but every report was hand-stitched.

Before AI could ever apply, the data foundation had to be trustworthy, queryable, and continuously refreshed. That's the work most AI projects skip and pay for later.

A POC environment proving reliable Bullhorn replication via REST data mirroring into an EC2-hosted SQL Server staging layer. Weekly Clarity imports and reference loads from the skills matrix. A data validation layer (row counts, freshness checks, sensitive-field flags) running before anything moves downstream.
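A validation gate of this kind might look like the following Python sketch. The three checks are the ones named above; the `Extract` fields, the sensitive-column list, and the staleness window are assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List

# Hypothetical list of column names that must be flagged before promotion.
SENSITIVE_FIELDS = {"ssn", "date_of_birth", "salary"}


@dataclass
class Extract:
    name: str
    source_rows: int
    staged_rows: int
    loaded_at: datetime
    columns: List[str]


def validate(extract: Extract, now: datetime, max_age: timedelta) -> List[str]:
    """Return a list of issues; an empty list means the extract may move downstream."""
    issues = []
    if extract.staged_rows != extract.source_rows:
        issues.append(
            f"row count mismatch: source={extract.source_rows} staged={extract.staged_rows}"
        )
    if now - extract.loaded_at > max_age:
        issues.append("stale: last load is older than the allowed window")
    flagged = sorted(SENSITIVE_FIELDS & set(extract.columns))
    if flagged:
        issues.append("sensitive fields present: " + ", ".join(flagged))
    return issues


now = datetime(2024, 1, 8, tzinfo=timezone.utc)
clean = Extract("placements", 1200, 1200, now - timedelta(days=1), ["id", "status"])
dirty = Extract("candidates", 500, 498, now - timedelta(days=10), ["id", "salary"])
clean_issues = validate(clean, now, timedelta(days=7))
dirty_issues = validate(dirty, now, timedelta(days=7))
```

Returning every issue at once, rather than stopping at the first, gives whoever reviews a blocked extract the full picture in one pass.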

Approved extracts then flow through S3 raw and curated zones into semantic models for recruiting pipeline analysis, allocation forecasting, and utilization. Power BI dashboards deliver CEO, COO, and CFO views built on trusted data, not a screenshot of a query someone ran last Tuesday.

Most AI failures aren't model failures — they're data failures. A data lake with clean lineage, freshness monitoring, governance, and validation is the substrate every AI use case downstream depends on. EI-Hub is the foundation we'll layer demand prediction, skills-matching automation, and natural-language reporting on top of next. Doing it in this order is how AI initiatives actually ship.

An AI-powered youth sports development platform combining BLE sensor integration, on-device motion processing, real-time swing scoring, and coach dashboards. Mission: bring elite-level training and personalized AI feedback to underserved communities where coaching access has historically been the barrier.

Roughly 70% of kids drop out of organized sports by age 13. Elite instruction is expensive and geographically concentrated. Underserved communities are systematically excluded from skill development that could change a young athlete's trajectory.

Fore The Youth's mission is to close that gap by giving every young athlete access to AI-augmented training that adapts to their skill level and learning style — without requiring an expensive private coach.

A complete product stack from BLE sensor to coach dashboard. A Mbient MetaMotionRL inertial sensor mounts on a club and streams 100 Hz gyroscope and accelerometer data to a Flutter app, which runs the entire signal-processing and scoring pipeline on-device. No raw frames ever leave the phone.

Coaches see student progress, drill performance, leaderboards, and session histories. Students get real-time feedback on tempo, swing path, smoothness, and consistency, plus achievements designed to keep adolescent attention engaged.

A state machine running at 100 Hz transitions through address, takeaway, backswing, transition, downswing, impact, and complete, using calibrated thresholds derived during a per-session three-step calibration sequence.
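To make the phase logic concrete, here is a simplified sketch in Python (the app itself is Flutter, so this is illustration only). The phase names are the ones listed above; the transition conditions, the sign convention (positive angular velocity on the way back, negative on the way down), and every threshold value are assumptions, not the calibrated rules the app uses.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Tuple


class Phase(Enum):
    ADDRESS = "address"
    TAKEAWAY = "takeaway"
    BACKSWING = "backswing"
    TRANSITION = "transition"
    DOWNSWING = "downswing"
    IMPACT = "impact"
    COMPLETE = "complete"


@dataclass
class Thresholds:
    # Hypothetical per-session values; gyro in deg/s, accel in g.
    motion_start: float
    backswing: float
    top_pause: float
    impact_accel: float
    rest: float


def step(phase: Phase, gyro: float, accel: float, t: Thresholds) -> Phase:
    """Advance at most one phase for a single sensor sample."""
    speed = abs(gyro)
    if phase is Phase.ADDRESS and speed > t.motion_start:
        return Phase.TAKEAWAY
    if phase is Phase.TAKEAWAY and speed > t.backswing:
        return Phase.BACKSWING
    if phase is Phase.BACKSWING and speed < t.top_pause:
        return Phase.TRANSITION
    if phase is Phase.TRANSITION and gyro < -t.motion_start:
        return Phase.DOWNSWING
    if phase is Phase.DOWNSWING and accel > t.impact_accel:
        return Phase.IMPACT
    if phase is Phase.IMPACT and speed < t.rest:
        return Phase.COMPLETE
    return phase


def run(samples: List[Tuple[float, float]], t: Thresholds) -> List[Phase]:
    """Feed (gyro, accel) samples through the machine and record each phase entered."""
    phase = Phase.ADDRESS
    visited = [phase]
    for gyro, accel in samples:
        next_phase = step(phase, gyro, accel, t)
        if next_phase is not phase:
            visited.append(next_phase)
        phase = next_phase
    return visited


thresholds = Thresholds(motion_start=20, backswing=150, top_pause=15, impact_accel=8, rest=30)
samples = [(5, 1.0), (40, 1.0), (200, 1.2), (10, 1.0), (-300, 2.0), (-900, 12.0), (-10, 1.0)]
phases = run(samples, thresholds)
```

Each sample can move the machine forward by one phase at most, so a single noisy reading cannot skip from address straight to impact.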

Per-swing metrics (tempo ratio, peak velocity, jerk-penalized path score, second-derivative smoothness, consistency-vs-recent variance) compute on-device. A weighted composite produces a 0-100 total score with plain-language coaching feedback.
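The weighted composite can be sketched as follows, again in Python for illustration. The weights, the assumption that each sub-score is already normalized to the range 0 to 1, and the feedback strings are all hypothetical; only the idea of a weighted 0-100 total with plain-language feedback comes from the description above.

```python
from typing import Dict

# Hypothetical weights; they sum to 1.0 so the total lands on a 0-100 scale.
WEIGHTS = {"tempo": 0.3, "path": 0.3, "smoothness": 0.2, "consistency": 0.2}

# Hypothetical coaching tips keyed by the weakest metric.
TIPS = {
    "tempo": "Slow the backswing a little and let the downswing speed up naturally.",
    "path": "Focus on keeping the club on the same line back and through.",
    "smoothness": "Try a lighter grip to take the jerkiness out of the swing.",
    "consistency": "Repeat the same setup routine before every swing.",
}


def clamp01(value: float) -> float:
    return min(max(value, 0.0), 1.0)


def composite_score(subscores: Dict[str, float]) -> int:
    """Combine normalized sub-scores (0 to 1) into a 0-100 total."""
    total = sum(WEIGHTS[name] * clamp01(subscores[name]) for name in WEIGHTS)
    return round(100 * total)


def feedback(subscores: Dict[str, float]) -> str:
    """Pick the tip for the lowest sub-score."""
    weakest = min(WEIGHTS, key=lambda name: subscores[name])
    return TIPS[weakest]


swing = {"tempo": 0.8, "path": 0.6, "smoothness": 0.9, "consistency": 0.5}
score = composite_score(swing)
tip = feedback(swing)
```

Tying the feedback to the single weakest metric keeps the message short, which matters when the reader is a young athlete between swings.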

Sessions persist to local SQLite first and sync to the backend when network returns. BLE disconnect-recovery preserves completed swings and resumes after reconnection. A youth program can't depend on perfect WiFi.
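The local-first pattern can be illustrated with Python's built-in `sqlite3` module (the app itself is Flutter). The table layout, the `synced` flag, and the `upload` callback are assumptions for this sketch, not the app's actual schema or sync protocol.

```python
import sqlite3
from typing import Callable


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    db = sqlite3.connect(path)
    db.execute(
        "CREATE TABLE IF NOT EXISTS swings ("
        "id INTEGER PRIMARY KEY, "
        "score INTEGER NOT NULL, "
        "synced INTEGER NOT NULL DEFAULT 0)"
    )
    return db


def record_swing(db: sqlite3.Connection, score: int) -> None:
    """Persist locally first, regardless of network state."""
    db.execute("INSERT INTO swings (score) VALUES (?)", (score,))
    db.commit()


def sync_pending(db: sqlite3.Connection, upload: Callable[[dict], None]) -> int:
    """Upload unsynced swings in order; stop at the first network failure."""
    rows = db.execute(
        "SELECT id, score FROM swings WHERE synced = 0 ORDER BY id"
    ).fetchall()
    sent = 0
    for swing_id, score in rows:
        try:
            upload({"id": swing_id, "score": score})
        except ConnectionError:
            break  # Still offline: leave the remaining rows for the next attempt.
        db.execute("UPDATE swings SET synced = 1 WHERE id = ?", (swing_id,))
        db.commit()
        sent += 1
    return sent


def offline_upload(payload: dict) -> None:
    raise ConnectionError("no network")


received = []
store = open_store()
record_swing(store, 72)
record_swing(store, 81)
sent_while_offline = sync_pending(store, offline_upload)
sent_when_online = sync_pending(store, received.append)
```

Marking a row as synced only after its upload succeeds means a dropped connection delays data but never loses it.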

The on-device-only processing isn't just an architecture preference — it's a privacy decision. Personal motion data and student identifiers never travel as raw frames. Only computed, anonymizable metrics reach the backend. This matters enormously for a platform serving minors.

An earlier AI golf coaching system that pioneered the sensor + computer vision + analytics stack now refined in Fore The Youth. Demonstrated end-to-end AI architecture from sensor to scorecard OCR to coaching dashboard.

OpenCV pipeline converting handwritten paper scorecards into structured tournament data — the same techniques that transfer to invoice extraction, claim forms, and contract parsing.

Wearable IMU sensor capture, calibration, and biomechanics analysis — foundation for the FTY Smart Swing platform.

AdminLTE-based operational dashboards surfacing player performance, swing analysis, and coaching recommendations.

Start with the free assessment to give us shared context, or email Terry directly to set up a discovery call.